Evidence-informed policymaking (EIPM) is a critical pathway to more effective policies, programmes, and practices. EIPM is supported through interventions such as capacity-building, evidence platforms, and evidence-to-policy communities of practice. Such efforts to improve the use of research evidence are happening around the globe to benefit socio-economic development by connecting research with policy and practice. Yet despite sustained investment in EIPM initiatives, the evidence on what works to strengthen evidence use has remained fragmented, without a consolidated global knowledge base on learning from and evaluation of EIPM efforts.

The Art and Science of Promoting Evidence-Informed Policymaking: A Global Living Evidence Map was developed to address this gap by collecting and organising the available evidence using systematic review methods across scientific and grey literature. Since 2017, the Pan-African Collective for Evidence has worked to keep this evidence base both rigorous and practical: a living resource that helps researchers, funders, and implementers quickly see what is known and what still needs to be tested. The map currently includes 940 studies identified from 139,520 citations, among them some studies funded by the William T. Grant Foundation on improving the use of research evidence.

This blog post provides an overview of the evidence map, highlighting what it includes, how to read it effectively, and what gaps remain.

How to read the evidence map

Evidence maps provide a visual overview of what evidence exists, how it is distributed, and where gaps remain, helping users locate studies quickly and see concentrations at a glance. A static map provides a snapshot; a living map is designed to stay useful as the field evolves. Currently, the map is updated quarterly, supplemented by ongoing engagement across evidence-use organisations to identify relevant published and grey literature that standard database searching can miss.

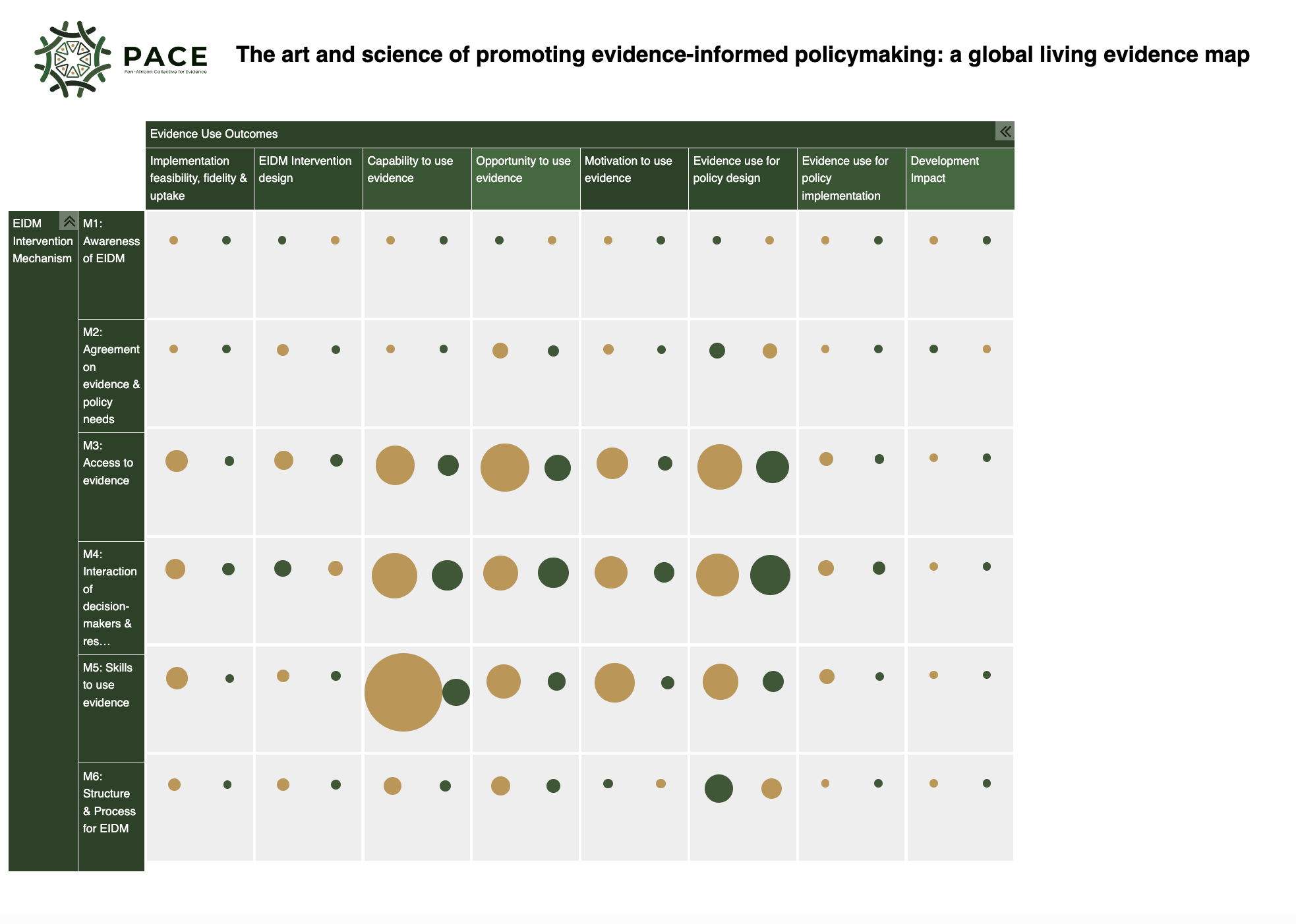

The map is structured using an intervention-outcome framework. The intervention axis groups evidence-use interventions by their underlying mechanisms of change, while the outcome axis presents intermediate to downstream outcomes of evidence use. Bubble size indicates the size of the evidence base. Gold bubbles correspond to evidence documenting scientific inquiry (the science of using evidence), while green bubbles denote tacit, practice-based learning (the art of using evidence). This provides a broad picture of the field, while also making clear where stronger empirical testing and more consistent reporting are still needed.

Art and Science of Promoting Evidence-Informed Policymaking: A Global Living Evidence Map

Because there is no single agreed-upon overarching theory for how evidence-informed policymaking happens, the map applies and refines an established conceptual framework to group interventions into six mechanisms of change:

The framework also uses capability, opportunity, and motivation as intermediary outcomes, which empirical evidence demonstrates are important correlates of evidence use. The map can be filtered (for example by region, sector, or study design), and a short video orients new users on how to navigate the tool.

On the outcome axis, the map distinguishes between primary outcomes (evidence use and socioeconomic impact) and intermediary outcomes that enhance the likelihood of evidence use (capability, motivation, opportunity, and implementation fidelity).

A practical way to use the map is to start with a mechanism, scan across outcomes to see what has been measured, and then use filters to narrow to the setting, sector, or study designs most relevant to your question.

What the evidence base looks like

One of the key messages of the map is that the evidence base on strategies to strengthen evidence use is substantial and growing. The field has expanded rapidly over the past decade, with more than half of the included studies published after 2015. While most studies have been conducted in the global north, there has been a notable recent increase in research from contexts in the global south.

At a high level, the evidence base clusters around approaches that strengthen interaction between evidence producers and users, improve access to evidence products and services, and build skills for engaging with evidence. Studies frequently measure intermediary outcomes, including capability, opportunity, and motivation to use evidence, alongside evidence use in policy design. Fewer studies follow the full pathway through to final outcomes and broader impacts, and the evidence base is not evenly distributed across sectors and settings (with strong representation from health and many studies from high-income contexts).

This matters for policy-making in practice: funders and implementers regularly face choices about where to allocate resources (new evaluations versus evidence synthesis), which mechanisms to test, and how to avoid duplication. A living evidence base can help ground those choices in what is already known and what is not yet well understood.

Where additional knowledge is needed

Even with a growing evidence base, important questions remain because studies vary in what they measure, where they are conducted, and how consistently they define “evidence use”. Key gaps include:

These align with a set of practical questions that many funders and research communities face: Are we drawing on the best available evidence when allocating resources to new EIPM interventions? Do we know where new research has the potential for the most impact in advancing the knowledge base? And do we know how to help researchers, brokers, and policy-makers access the latest evidence on evidence use?

Conclusion

Taken together, the map demonstrates that the evidence base on strategies to strengthen evidence use is substantial and growing, but unevenly distributed and still limited in places by gaps in outcomes measured, contexts covered, and consistency of concepts and indicators.

As a living evidence base, the map is intended to support the accumulation of knowledge in the field. It helps researchers design new studies with a clearer view of what has already been tested and where key uncertainties remain. It also helps funders and implementers see where evidence is dense versus thin, and where additional knowledge could most improve policy-making. Over time, the aim is to make it easier to build on existing learning, avoid duplication, and target research and investment where it adds the most value.